Design & Implementation of Mobile Analytics

There is a better way to make your mobile analytics far more effective and to dramatically reduce your integration time!

I'll also show why the best person to manage analytics not only understands metrics but also has good product sense and a deep understanding of the mobile app itself.

Read on...

BACKGROUND:

Since the beginning of this year, I've had a great opportunity to work with five different mobile gaming companies on short-term consulting projects, typically around mobile game monetization and optimization. Without breaking my confidentiality agreements, I can say that these companies ran the gamut in genre (from super casual to hardcore) and in success (from top 10 to floundering).

Most of the companies we worked with were fairly well resourced, a typical characteristic of companies able to afford external consulting fees. Surprisingly, nearly every one of them treated analytics and reporting as an afterthought, left to the very end.

In a couple of cases, I saw a massive list of metrics in an Excel spreadsheet or Google Doc. Once the products went live, however, we found that most of those analytics had never been instrumented, so we were left with just the typical monetization and retention KPIs that come standard with most third-party dashboards.

DESIGN:

Let's begin with the design of your analytics and what you should be thinking about.

First of all, analytics work should start at the very beginning of game development. As soon as a game design document of some kind is completed, start building hypotheses about the app.

We start by developing key hypotheses about what will determine the app's success or failure and which key risks could kill it. We should also define the objectives for the app. Think of this as an initial "MVR" (Minimum Viable Reporting): the set of the most critical measures that help us validate the hypotheses.
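One way to make the MVR idea concrete is to keep each hypothesis next to the metrics that would confirm or refute it, then take the de-duplicated union of those metrics as the initial reporting set. This is an illustrative sketch only: the `Hypothesis` class, the `minimum_viable_reporting` function, and the metric names are hypothetical and not taken from any analytics tool.

```python
from dataclasses import dataclass, field


@dataclass
class Hypothesis:
    """A claim about the app plus the metrics that would confirm or refute it."""
    statement: str
    test_metrics: list[str] = field(default_factory=list)


def minimum_viable_reporting(hypotheses: list[Hypothesis]) -> list[str]:
    """Union of all test metrics, de-duplicated, in first-seen order."""
    seen: dict[str, None] = {}  # dicts preserve insertion order
    for hypothesis in hypotheses:
        for metric in hypothesis.test_metrics:
            seen.setdefault(metric, None)
    return list(seen)


# Hypothetical hypotheses and metric names.
hypotheses = [
    Hypothesis("Brand lowers user acquisition cost", ["ecpi", "organics_per_day"]),
    Hypothesis("Turn-based battle keeps users playing", ["d1_retention", "d7_retention"]),
    Hypothesis("The game is profitable", ["ecpi", "ltv"]),
]
print(minimum_viable_reporting(hypotheses))
# ['ecpi', 'organics_per_day', 'd1_retention', 'd7_retention', 'ltv']
```

Keeping the hypothesis text attached to each metric makes it easy to explain later why a metric is in the MVR at all.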

So ask yourself the following key questions:

Bases of Competition: What are the key bases of competition for this app? What do we think will determine success or failure for us? Why will a user pick this app over another similar app?

Example: If this is a branded card battle game whose +1 is a turn-based battle system, potential ways of thinking about the game's success or failure would be:

Brand: Strength of the brand to lower user acquisition costs

Improved (hopefully) Gameplay: How well turn-based battle resonates with users relative to standard automated async battle systems

Key Risks: What are the top 3-5 risks in this game? These can overlap with the +1 but should also include any other key risks for the product, such as:

Monetization/retention?

Game design?

Platform or technology risk?

Server side stability/performance (especially for games with real time component)?

User reaction to a new feature?

Content capacity/ability to scale content?

etc.

Objectives: How do we measure success? For most companies this should be profitability, but as we've seen in the current market, some larger companies pursue other objectives:

Profitability?

Reach/Ranking?

Revenue?

So let's go through a hypothetical example.

Hypothetical Mobile Game Product: Justice League Rush

Concept: Take the gameplay of a tower defense game like Kingdom Rush Frontiers and +1 it

+1 design parameters:

Brand: Branded gameplay using DC Comics/Warner Bros Justice League brand

Hypothesis: The Justice League brand will 1. enable a significant Apple feature, 2. dramatically decrease overall user acquisition costs, and 3. generate significant organic user traffic so long as the game charts

Multi-hero: Increase focus on heroes by enabling the user to control up to 3 heroes at a time

Hypothesis: Multiple hero control will motivate users to spend more money on hero upgrades/items and to invest more emotionally in the heroes, without the increased micro (micromanagement) significantly reducing gameplay time, sessions, etc. (Note: I don't actually believe this.)

Social: Promote more player-to-player and friend interaction by enabling the user to call in reinforcements from a set of friends, somewhat similar to what's done in Puzzle & Dragons

Hypothesis: We should see a significant increase in user retention based on light user interactions, increased notification activity, and messages/other social activity.

Hybrid Payment Model: Use a Candy Crush Saga-style payment model in which the game is initially F2P, with hard gates every 15-20 levels or so that are unlocked by payment or social actions.

Hypothesis: This model will dramatically increase overall game revenue vs. a paid model, as we expect a high payer conversion rate (the Candy Crush Saga effect). It should also complement the social features and help increase the overall user base.

Whether you believe the hypotheses or not, you should stake a claim one way or the other and test it. Now that we have defined the game concept, let's proceed with the key questions:

Bases of Competition: The key differentiating aspects of the game should be the brand and the payment model. Having said that, let's step through each of the +1 features and develop views and test metrics for each:

Brand:

The Justice League brand should drive a large number of organic installs (organics):

Test: Organics per day (and by chart position), Organics as a % of installs

The Justice League brand should increase ad CTR, potentially letting us shift UA focus to CPC rather than CPI campaigns:

Test: mobile ad CTR, CPI of our CPC campaigns

The Justice League brand should reduce overall user acquisition costs:

Test: measure eCPI relative to other games
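The brand tests above reduce to a few ratios. This sketch assumes eCPI is blended (total UA spend divided by all installs, paid plus organic) and that the "CPI of our CPC campaigns" is campaign spend divided by the installs it produced. Definitions vary between ad networks and dashboards, so check them against your own. All function names and numbers are hypothetical, and comparing eCPI "relative to other games" still needs outside benchmarks.

```python
from collections import defaultdict


def organic_share(organic_installs: int, total_installs: int) -> float:
    """Organic installs as a fraction of all installs for a period (e.g. one day)."""
    return organic_installs / total_installs if total_installs else 0.0


def avg_organics_by_rank(days: list[tuple[int, int]]) -> dict[int, float]:
    """Average daily organics at each chart position, from (rank, organics) pairs."""
    by_rank: dict[int, list[int]] = defaultdict(list)
    for rank, organics in days:
        by_rank[rank].append(organics)
    return {rank: sum(v) / len(v) for rank, v in sorted(by_rank.items())}


def ctr(clicks: int, impressions: int) -> float:
    """Ad click-through rate."""
    return clicks / impressions if impressions else 0.0


def campaign_cpi(spend: float, installs: int) -> float:
    """Cost per install for one campaign, however it was bought.

    For a CPC campaign this works out to CPC divided by the click-to-install rate.
    """
    return spend / installs if installs else float("inf")


def blended_ecpi(total_ua_spend: float, paid_installs: int, organic_installs: int) -> float:
    """Effective CPI under the blended definition: spend over paid plus organic installs."""
    installs = paid_installs + organic_installs
    return total_ua_spend / installs if installs else float("inf")


# Hypothetical numbers for one day of UA.
print(organic_share(3_000, 10_000))
print(avg_organics_by_rank([(5, 2000), (5, 2400), (12, 900)]))
print(ctr(250, 10_000))
print(campaign_cpi(spend=250 * 0.40, installs=50))  # 250 clicks at a $0.40 CPC
print(blended_ecpi(14_000.0, paid_installs=7_000, organic_installs=3_000))
```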

Multi-hero:

Using multiple heroes will make users want to upgrade more heroes and become more emotionally attached to them, increasing hero monetization

Test: Check the ARPDAU contribution of hero upgrades/items. Look for comparable numbers from other games.

Increased micro will not decrease gameplay usage. This is actually a major concern, as I strongly believe that micro usually doesn't work in mobile games.

Test: Avg sessions, Avg session length, Time spent in app per active user, d1/7/30 retention

Overall monetization should increase (without a corresponding decrease in retention or usage)

Test: Overall ARPDAU, LTV
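Most of the multi-hero tests are standard engagement and monetization metrics. This sketch shows one common way to compute classic day-N retention, per-day session stats, and ARPDAU broken out by item category, so the hero upgrades/items contribution can be isolated. The record shapes (such as `(user, day, session_seconds)` tuples) and all names are assumptions for illustration. Third-party dashboards may define these metrics slightly differently, for example rolling vs. classic retention.

```python
from datetime import date, timedelta


def day_n_retention(installs: dict[str, date], active_days: set[tuple[str, date]], n: int) -> float:
    """Classic day-N retention: share of an install cohort active exactly N days after install.

    Assumes every user in `installs` installed at least N days ago (a mature cohort).
    """
    if not installs:
        return 0.0
    retained = sum(
        1
        for user, installed in installs.items()
        if (user, installed + timedelta(days=n)) in active_days
    )
    return retained / len(installs)


def session_stats(sessions: list[tuple[str, date, float]]) -> dict[str, float]:
    """Usage metrics from (user, day, session_seconds) records."""
    if not sessions:
        return {"sessions_per_dau": 0.0, "avg_session_seconds": 0.0, "seconds_per_dau": 0.0}
    user_days = {(user, day) for user, day, _ in sessions}  # one entry per active user per day
    total_seconds = sum(seconds for _, _, seconds in sessions)
    return {
        "sessions_per_dau": len(sessions) / len(user_days),
        "avg_session_seconds": total_seconds / len(sessions),
        "seconds_per_dau": total_seconds / len(user_days),
    }


def arpdau_by_category(purchases: list[tuple[str, float]], dau: int) -> dict[str, float]:
    """ARPDAU for one day split by item category; the values sum to overall ARPDAU."""
    if dau == 0:
        return {}
    totals: dict[str, float] = {}
    for category, amount in purchases:
        totals[category] = totals.get(category, 0.0) + amount
    return {category: amount / dau for category, amount in totals.items()}


# Hypothetical data: four installs on the same day.
installs = {u: date(2014, 6, 1) for u in ("a", "b", "c", "d")}
active = {("a", date(2014, 6, 2)), ("b", date(2014, 6, 2)), ("a", date(2014, 6, 8))}
print(day_n_retention(installs, active, 1), day_n_retention(installs, active, 7))  # 0.5 0.25
print(session_stats([("a", date(2014, 6, 2), 300.0), ("b", date(2014, 6, 2), 180.0)]))
print(arpdau_by_category([("hero_upgrade", 5.0), ("gems", 10.0)], dau=100))
```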

Social:

Social features will increase retention by: 1. generating additional notifications that people actually want to see, 2. creating social connections with other users, 3. creating rivalries

Test: d1/7/30 retention, notification click through %, notifications clicked per user, social invitations sent per user, % paid vs. social hard gate unlock, number of friends per active user, etc.
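Apart from retention, the social tests are simple ratios over daily counts. This sketch uses a hypothetical `SocialDay` record and `social_metrics` function. It assumes "per user" means per daily active user and that each hard gate unlock is recorded as either paid or social.

```python
from dataclasses import dataclass


@dataclass
class SocialDay:
    """Hypothetical daily counts for the social tests."""
    dau: int
    notifications_sent: int
    notifications_clicked: int
    invites_sent: int
    friends_total: int  # sum of friend counts across the day's active users
    gate_unlocks_paid: int
    gate_unlocks_social: int


def _ratio(numerator: float, denominator: float) -> float:
    """Safe division that returns 0.0 when there is nothing to divide by."""
    return numerator / denominator if denominator else 0.0


def social_metrics(day: SocialDay) -> dict[str, float]:
    """Notification, invitation, friend, and hard-gate unlock ratios for one day."""
    unlocks = day.gate_unlocks_paid + day.gate_unlocks_social
    return {
        "notification_ctr": _ratio(day.notifications_clicked, day.notifications_sent),
        "notifications_clicked_per_user": _ratio(day.notifications_clicked, day.dau),
        "invites_per_user": _ratio(day.invites_sent, day.dau),
        "friends_per_user": _ratio(day.friends_total, day.dau),
        "pct_gate_unlocks_paid": _ratio(day.gate_unlocks_paid, unlocks),
        "pct_gate_unlocks_social": _ratio(day.gate_unlocks_social, unlocks),
    }


# Hypothetical day: 1,000 DAU, 4,000 notifications sent, 400 clicked, and so on.
print(social_metrics(SocialDay(1_000, 4_000, 400, 250, 3_500, 30, 90)))
```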

Hybrid Payment Model:

An incremental paid app model like Candy Crush Saga's should increase overall monetization relative to a paid or purely free model for this kind of skill-based game

Test: Weigh ARPU, social installs from hard gates, and user expansion (from being free) x ARPU against a paid-only model, e.g., by comparison with Kingdom Rush Frontiers.
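The payment-model comparison is back-of-the-envelope arithmetic: revenue is roughly users x ARPU, and the hybrid model adds social installs from hard gates. This sketch assumes social installs monetize at the same ARPU as other users and that a paid-only app's ARPU equals its price, ignoring any in-app purchases and platform fees. The function names are hypothetical, and the inputs are made-up numbers, not data from any real game.

```python
def projected_revenue(users: int, arpu: float, social_installs: int = 0) -> float:
    """Gross revenue estimate: (users + social installs from hard gates) x ARPU.

    Assumes social installs monetize at the same ARPU as everyone else.
    """
    return (users + social_installs) * arpu


def hybrid_vs_paid(hybrid_users: int, hybrid_arpu: float, social_installs: int,
                   paid_users: int, paid_price: float) -> float:
    """Ratio of hybrid-model revenue to paid-only revenue; above 1.0 favors the hybrid model.

    Treats a paid-only app's ARPU as its price (no IAP, no platform fee).
    """
    hybrid = projected_revenue(hybrid_users, hybrid_arpu, social_installs)
    paid = projected_revenue(paid_users, paid_price)
    return hybrid / paid


# Made-up inputs: free-to-start reaches 5x the users of a $3 paid version.
print(hybrid_vs_paid(500_000, 0.60, 50_000, 100_000, 3.0))
```

The interesting output is less the ratio itself than how sensitive it is to the user-expansion and ARPU assumptions, which is exactly what the post-launch metrics should pin down.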

Key Risks:

Multi-Hero Feature Micro: Concern that too much micro can create a poor experience for mobile users

Covered above

Brand Transfer: Concern over whether the Justice League brand translates to a tower defense game and whether its audience transfers well to the tower defense genre.

Test: D1/7/30 retention, session time, and # of sessions relative to other tower defense games. Ideally this risk would be addressed before launch rather than by analytics after the fact, but we should try to measure it nonetheless.

Level Design, Balancing & Tuning: Concern that the success of games like Kingdom Rush Frontiers stems from a well-balanced game with interesting level design, which we would have to match.

Test: Progression funnel by map, days to map (e.g., how many days it takes to reach map 1, 2, 3, etc.), sessions per map
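For the level design risk, the progression funnel, days to map, and sessions per map can be computed from three inputs: install dates, the date each user first reached each map, and session records tagged with the map played. The record shapes and function names below are hypothetical.

```python
from collections import defaultdict
from datetime import date
from statistics import median
from typing import Optional


def progression_funnel(installs: dict[str, date],
                       reached: dict[str, dict[int, date]],
                       maps: list[int]) -> dict[int, float]:
    """Share of installers who reached each map."""
    total = len(installs)
    funnel = {}
    for map_id in maps:
        count = sum(1 for user in installs if map_id in reached.get(user, {}))
        funnel[map_id] = count / total if total else 0.0
    return funnel


def median_days_to_map(installs: dict[str, date],
                       reached: dict[str, dict[int, date]],
                       map_id: int) -> Optional[float]:
    """Median days from install to first reaching `map_id`, among users who reached it."""
    days = [
        (reached[user][map_id] - installed).days
        for user, installed in installs.items()
        if map_id in reached.get(user, {})
    ]
    return median(days) if days else None


def sessions_per_map(session_maps: list[tuple[str, int]]) -> dict[int, float]:
    """Average sessions per player on each map, from (user, map_id) session records."""
    sessions: dict[int, int] = defaultdict(int)
    players: dict[int, set[str]] = defaultdict(set)
    for user, map_id in session_maps:
        sessions[map_id] += 1
        players[map_id].add(user)
    return {map_id: sessions[map_id] / len(players[map_id]) for map_id in sorted(sessions)}


# Hypothetical cohort of four installs.
installs = {"a": date(2014, 6, 1), "b": date(2014, 6, 1), "c": date(2014, 6, 2), "d": date(2014, 6, 2)}
reached = {
    "a": {1: date(2014, 6, 1), 2: date(2014, 6, 4)},
    "b": {1: date(2014, 6, 1)},
    "c": {1: date(2014, 6, 2), 2: date(2014, 6, 3), 3: date(2014, 6, 9)},
}
print(progression_funnel(installs, reached, [1, 2, 3]))  # {1: 0.75, 2: 0.5, 3: 0.25}
print(median_days_to_map(installs, reached, 2))          # 2.0
```

A sharp drop between two adjacent maps in the funnel, or a jump in days to map, points at the specific map whose balancing or level design needs attention.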

Objectives:

Profitability: eCPI < LTV

Monetization/LTV proxies: d1/7/30 first time buyer conversion, 30 day ARPU, ARPDAU, % Paying user

Retention: d1/7/30

UA: CPI, organics/day (and by chart position)
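The objectives can be checked together: compute early conversion and a cohort ARPU as LTV proxies, then compare against eCPI. This is a minimal sketch under two assumptions. Day-N conversion counts first purchases made on or before day N after install, and 30-day ARPU stands in for LTV. A real LTV projection would extend beyond 30 days. All names and inputs are hypothetical.

```python
from datetime import date


def first_purchase_conversion(installs: dict[str, date],
                              first_purchase: dict[str, date], n: int) -> float:
    """Share of an install cohort whose first purchase came on or before day N after install."""
    if not installs:
        return 0.0
    converted = sum(
        1
        for user, installed in installs.items()
        if user in first_purchase and (first_purchase[user] - installed).days <= n
    )
    return converted / len(installs)


def pct_paying(installs: dict[str, date], first_purchase: dict[str, date]) -> float:
    """Share of the cohort that has ever paid."""
    if not installs:
        return 0.0
    return sum(1 for user in installs if user in first_purchase) / len(installs)


def cohort_arpu(installs: dict[str, date],
                purchases: list[tuple[str, date, float]], days: int) -> float:
    """Revenue per installer from purchases in days 0..days-1 after install (e.g. 30-day ARPU)."""
    if not installs:
        return 0.0
    revenue = sum(
        amount
        for user, purchased, amount in purchases
        if user in installs and 0 <= (purchased - installs[user]).days < days
    )
    return revenue / len(installs)


def is_profitable(ecpi: float, ltv: float) -> bool:
    """The profitability objective: each install must earn back more than it costs."""
    return ecpi < ltv


# Hypothetical cohort: four installs on June 1, two of them paying.
installs = {u: date(2014, 6, 1) for u in "abcd"}
first_purchase = {"a": date(2014, 6, 1), "b": date(2014, 6, 5)}
purchases = [("a", date(2014, 6, 1), 5.0), ("b", date(2014, 6, 5), 10.0), ("a", date(2014, 7, 15), 20.0)]
ltv30 = cohort_arpu(installs, purchases, 30)  # the July 15 purchase falls outside 30 days
print(ltv30, is_profitable(ecpi=1.40, ltv=ltv30))  # 3.75 True
```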

These analytics should make up the initial set that we develop.

Beyond this core set, we should define additional analytics for specific applications, for example:

Audit

User Acquisition

Optimization

Anti-Hacking

This process is described in an earlier post of mine here: Introduction to Mobile Analytics & Reporting.

IMPLEMENTATION:

With the analytics design complete, we are ready to implement it.

I've seen analytics integration efforts take weeks. This shouldn't be the case.

I've developed two tools that I believe significantly help developers and analytics managers get on the same page and reduce time spent on coordination and integration:

An Application/Role/Metrics table (or just "ARM Table") as described in my earlier post

This table gives developers an overall roadmap of what needs to be instrumented and reported, by application and by role (e.g., specific dashboard views)

An Analytics Events & Properties table which I describe below

This defines what needs to be instrumented and helps translate between coders and analytics managers

The Analytics Events & Properties Table (or just "EP Table") needs to contain only the following columns:

Event Name | Properties | Code Name (The name used in code and sent up to the analytics service) | Notes

Here is an example of an EP Table that an analytics manager would fill out and then hand over to the developers:

Link to Example EP Table
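On the code side, the EP Table can be mirrored directly so that instrumentation cannot drift from it. This sketch is a hypothetical wrapper, not any analytics SDK's API. `track` rejects events whose code name is not in the table or that lack expected properties. It appends valid events to a list, where a real app would forward them to its third-party SDK.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class EPRow:
    event_name: str              # name the analytics manager uses
    properties: tuple[str, ...]  # properties expected on the event
    code_name: str               # name used in code and sent to the analytics service
    notes: str = ""


# Hypothetical rows for the Justice League Rush example.
EP_TABLE = [
    EPRow("Level Complete", ("map", "level", "stars"), "level_complete"),
    EPRow("Hard Gate Unlocked", ("gate_id", "method"), "gate_unlocked", "method: paid or social"),
]
_BY_CODE_NAME = {row.code_name: row for row in EP_TABLE}


def track(code_name: str, props: dict[str, Any], sink: list) -> None:
    """Validate an event against the EP Table, then record it.

    `sink` stands in for a third-party analytics SDK call, which is not shown.
    """
    row = _BY_CODE_NAME.get(code_name)
    if row is None:
        raise ValueError(f"{code_name!r} is not in the EP Table")
    missing = set(row.properties) - set(props)
    if missing:
        raise ValueError(f"{code_name!r} is missing properties {sorted(missing)}")
    sink.append((code_name, props))


sent: list = []
track("level_complete", {"map": 1, "level": 3, "stars": 2}, sent)
```

Failing loudly in development builds surfaces naming mismatches before launch rather than in the dashboards afterwards.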

The overall process should work like this:

Hand-off: The analytics manager (or whoever is responsible for analytics) defines the ARM Table and EP Table and hands them off to the game developers

Instrumentation: Developers study the tables and instrument the game code as they write it. They:

Instrument third-party analytics where possible based on the EP Table, and fill in the EP Table (e.g., the code names they used)

Build proprietary reporting tools for whatever third-party analytics can't handle

Configuration: The analytics manager takes the completed EP Table back and configures the third-party analytics sites and dashboard views. The manager also checks any reporting tools to make sure all reporting is accurate and complete.

Once the analytics manager has the EP Table back, configuring analytics is easy: event and property names are well defined, and there's no need to bother a developer to dig through code to find out what an event or property was called.
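Part of this check can be automated. The sketch assumes the completed EP Table is exported as CSV with the four columns above, with properties separated by `;` (an arbitrary choice). It also assumes you can list the event code names your analytics service has actually received. The audit then flags rows still missing a code name, documented events that never arrive, and incoming events that nobody documented. The function names and sample rows are hypothetical.

```python
import csv
import io

# Hypothetical CSV export of a completed EP Table; properties are ';'-separated.
EP_CSV = """Event Name,Properties,Code Name,Notes
Level Complete,map;level;stars,level_complete,
Hard Gate Unlocked,gate_id;method,gate_unlocked,method is paid or social
Friend Reinforcement,friend_id,,
"""


def load_ep_table(text: str) -> list[dict[str, str]]:
    """Parse the CSV export into one dict per row, keyed by column header."""
    return list(csv.DictReader(io.StringIO(text)))


def audit_ep_table(rows: list[dict[str, str]], observed: set[str]) -> dict[str, list[str]]:
    """Compare the EP Table with the event code names the analytics service actually received."""
    documented = {row["Code Name"] for row in rows if row["Code Name"]}
    return {
        "missing_code_name": [row["Event Name"] for row in rows if not row["Code Name"]],
        "never_received": sorted(documented - observed),
        "undocumented": sorted(observed - documented),
    }


report = audit_ep_table(load_ep_table(EP_CSV), observed={"level_complete", "tutorial_step"})
print(report)
# {'missing_code_name': ['Friend Reinforcement'], 'never_received': ['gate_unlocked'], 'undocumented': ['tutorial_step']}
```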

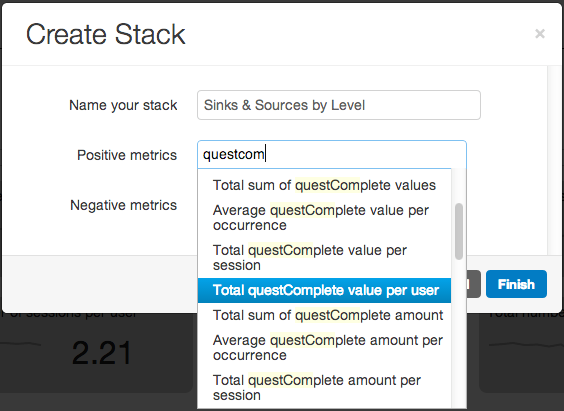

Analytics Configuration Screenshot (From Leanplum):

Following this methodology should accomplish three primary goals:

Focus: Help focus the team on the key analytics most relevant to the game's success or failure

Reduce Time: Reduce integration and coordination time between PMs/producers/analysts and developers, taking integration time from weeks to days or even hours

Day 1 Launch: With up-front planning and analytics integrated during development, reporting should be available from day 1 of launch, so nobody has to scramble to figure things out after it's too late

Good luck! If you have a better way of doing this please let me know.